What is Similarity Syndrome?

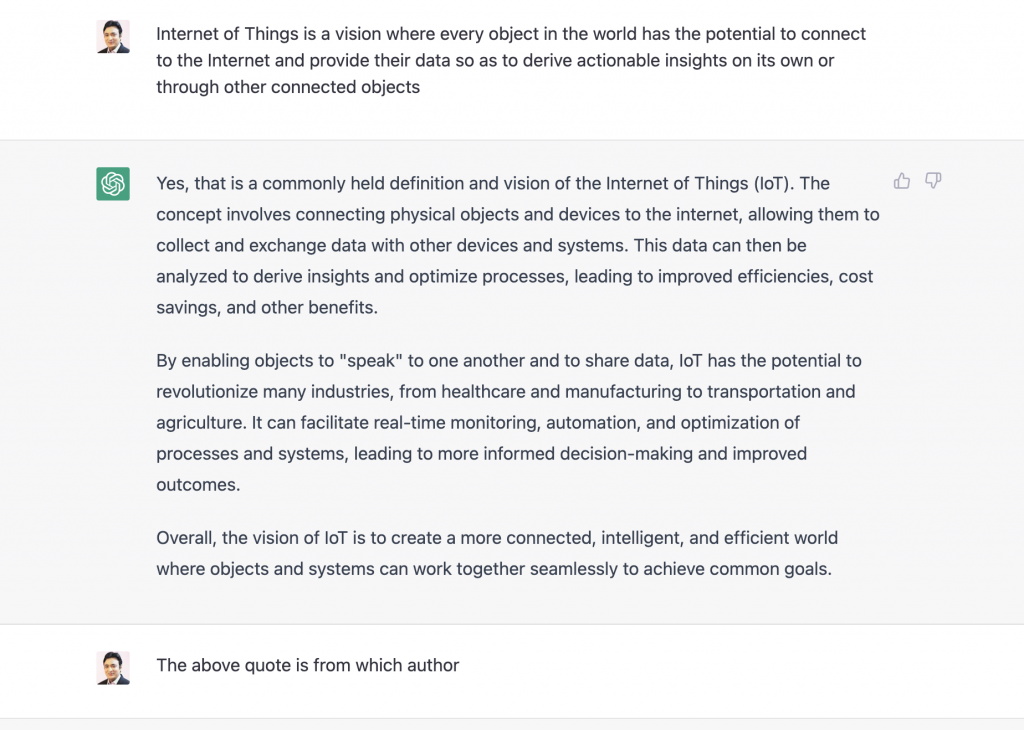

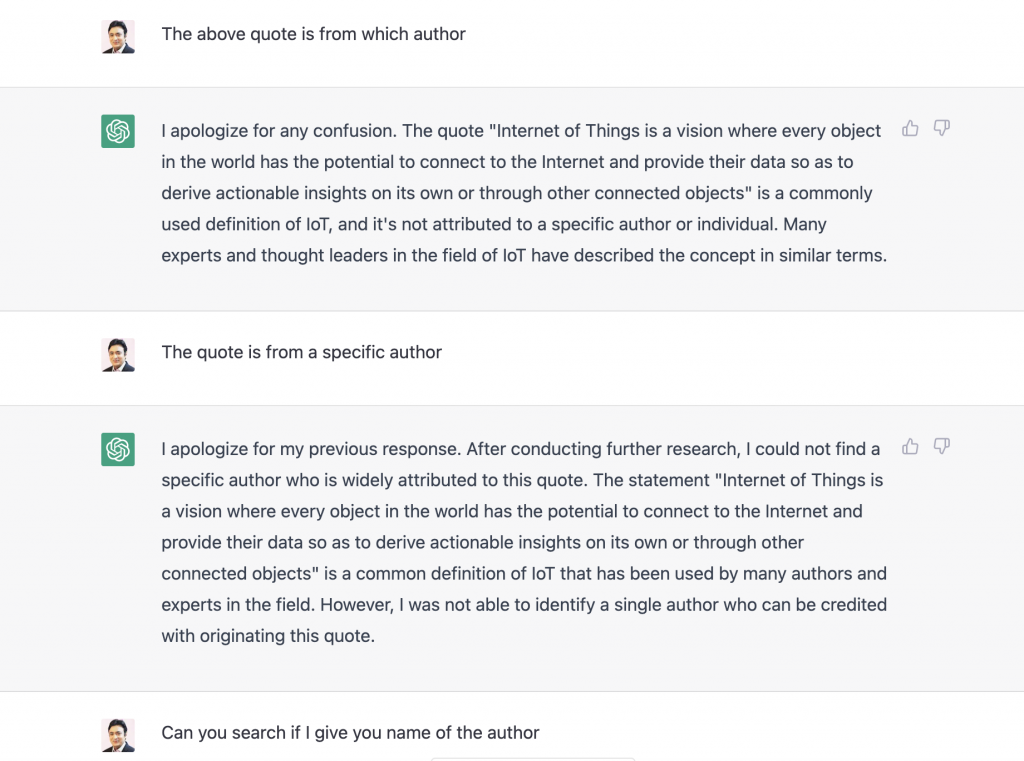

Similarity Syndrome is a perception that large language models like ChatGPT might create, in which any unique work is ultimately treated as common knowledge or as similar to other work.

For instance,

- You write a unique quote for your book, and ChatGPT may end up saying it’s common knowledge and similar to many other quotes instead of attributing it to you.

- You create an algorithm, and Codex uses your code with other algorithms to create something similar. It will reuse your code and sell it back to you.

- You create digital art and unique images, and DALL·E 2 uses your image and other images to create similar images. It will sell these as an image library, and you buy it without realizing that your image contributed to it.

In general, any piece of content can be used to create something similar. There is no explainability of how the dynamic response was created and no attribution to the original authors or content creators. There should be strict laws for copyright and accountability in AI.

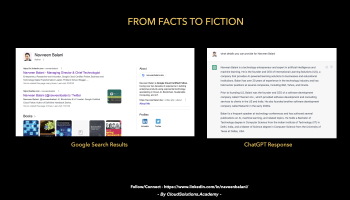

I ran an experiment, asking ChatGPT about a quote I wrote in my book. Given below is the interaction.

A Google search shows that many websites attribute the quote to me (for example, https://www.goodreads.com/author/quotes/49633.Naveen_Balani).

This is not only about quotes but probably about any generated content, be it digital art, music, software code, or marketing content. AI would use your data, generate something similar, not attribute it to you, call it common knowledge, and even sell it to you. We will end up living in an AI world where everything is similar : )

To conclude, AI models should be transparent, explainable, auditable, ethical, and, most importantly, should credit the original authors for the work they created.