This article is part of the IoT Architecture Series – https://navveenbalani.dev/index.php/articles/internet-of-things-architecture-components-and-stack-view/.

Core Platform Layer

The core platform layer provides a set of capabilities to connect, collect, monitor and control millions of devices. Let’s look at each of the components of the core platform layer.

Protocol Gateway

An enterprise IoT platform typically supports one protocol end to end, such as AMQP or MQTT, as part of the overall stack. However, in an IoT landscape without standardized protocols on which all vendors can converge, an enterprise IoT stack needs to support commonly used protocols and industry protocols, as well as standards that will evolve in the future.

One option is to use the device gateway discussed earlier to convert proprietary device protocols into the protocol the platform supports. That may not always be possible, however: devices may connect directly to the platform, or the device gateway may not support the protocol used by the IoT stack. To handle multiple protocols, a protocol gateway is used, which converts incoming protocols into the protocol supported by your IoT stack. Building an abstraction such as a protocol gateway also makes it easier to support new protocols in the future.

The protocol gateway layer provides connectivity to devices over the protocols supported by the IoT stack. Typically, the communication is channeled to a messaging platform or middleware based on a protocol such as MQTT or AMQP. The protocol gateway layer can also act as a facade for different protocols, performing conversions across protocols and handing off the implementation to the corresponding IoT messaging platform.
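
The facade idea can be sketched in a few lines of Python. This is a minimal illustration, not a production gateway: the two device formats (a comma-separated proprietary payload and a JSON payload with vendor-specific field names), the canonical message shape and the `devices/<id>/<metric>` topic scheme are all hypothetical, and the `publish` callable stands in for whatever MQTT or AMQP client the platform actually uses.

```python
import json
from typing import Callable, Dict, List, Tuple

# Hypothetical canonical message: {"device_id": str, "metric": str, "value": float}
CanonicalMessage = Dict[str, object]
Adapter = Callable[[bytes], CanonicalMessage]


def from_csv(raw: bytes) -> CanonicalMessage:
    """Adapter for a hypothetical proprietary payload: b"device_id,metric,value"."""
    device_id, metric, value = raw.decode("utf-8").strip().split(",")
    return {"device_id": device_id, "metric": metric, "value": float(value)}


def from_json(raw: bytes) -> CanonicalMessage:
    """Adapter for a hypothetical JSON payload that uses different field names."""
    doc = json.loads(raw)
    return {"device_id": doc["id"], "metric": doc["type"], "value": float(doc["reading"])}


class ProtocolGateway:
    """Facade that converts each supported protocol into one canonical message."""

    def __init__(self, publish: Callable[[str, CanonicalMessage], None]) -> None:
        self._publish = publish  # stand-in for an MQTT/AMQP client's publish call
        self._adapters: Dict[str, Adapter] = {}

    def register(self, protocol: str, adapter: Adapter) -> None:
        self._adapters[protocol] = adapter

    def handle(self, protocol: str, raw: bytes) -> CanonicalMessage:
        adapter = self._adapters.get(protocol)
        if adapter is None:
            raise ValueError(f"unsupported protocol: {protocol}")
        message = adapter(raw)
        # Hand off the converted message to the messaging middleware.
        self._publish(f"devices/{message['device_id']}/{message['metric']}", message)
        return message


# Usage: collect published messages in a list instead of a real broker.
published: List[Tuple[str, CanonicalMessage]] = []
gateway = ProtocolGateway(lambda topic, msg: published.append((topic, msg)))
gateway.register("legacy-csv", from_csv)
gateway.register("http-json", from_json)
gateway.handle("legacy-csv", b"pump-7,temperature,71.5")
gateway.handle("http-json", b'{"id": "pump-8", "type": "temperature", "reading": 69}')
```

Because each protocol is isolated behind an adapter, supporting a new protocol later means registering one more function rather than changing the gateway itself.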

IoT Messaging Middleware

Messaging middleware is software or an appliance that allows senders (publishers) and receivers (consumers of the information) to exchange messages in a loosely coupled manner, without being directly connected to each other. Messaging middleware allows multiple consumers to receive messages from a single publisher, and a single consumer to receive messages from multiple publishers.
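
To illustrate the loose coupling, here is a toy in-memory publish/subscribe broker in Python. It is only a sketch under simplifying assumptions: real IoT middleware (for example an MQTT or AMQP broker) adds network transport, persistence, delivery guarantees and topic wildcards, none of which are modeled here. The topic names are hypothetical.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List, Tuple

Handler = Callable[[str, str], None]


class InMemoryBroker:
    """Toy topic-based broker: publishers and subscribers never reference each other."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: str) -> int:
        """Deliver payload to every subscriber of topic; return the delivery count."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(topic, payload)
        return len(handlers)


broker = InMemoryBroker()
dashboard: List[str] = []
storage: List[str] = []
fleet: List[Tuple[str, str]] = []

# One publisher, several consumers: dashboard and storage both receive car 42's data.
broker.subscribe("car/42/speed", lambda t, p: dashboard.append(p))
broker.subscribe("car/42/speed", lambda t, p: storage.append(p))
# One consumer, several publishers: a fleet monitor listens to two cars.
for topic in ("car/42/speed", "car/43/speed"):
    broker.subscribe(topic, lambda t, p: fleet.append((t, p)))

delivered_42 = broker.publish("car/42/speed", "60")
broker.publish("car/43/speed", "65")
```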

Messaging middleware is not a new concept; it has long been used as the backbone for message communication between enterprise systems, as an integration pattern that connects various distributed systems in a unified way, or as a component built into application servers. In the context of IoT, messaging middleware becomes a key capability: it must be highly scalable and high-performance to accommodate a vast and ever-growing number of connected devices. Gartner, Inc. forecasts that 4.9 billion connected things will be in use in 2015 and will reach 25 billion by 2020.

The IoT messaging middleware platform needs to provide various device management capabilities, such as registering devices, storing the device meta-model, securing device connectivity, storing device data for a specified interval and providing dashboards to view connected devices. The storage requirements imposed by an IoT platform are quite different from those of a traditional messaging platform, as we are looking at terabytes of data from connected devices while ensuring high performance and fault tolerance. The IoT messaging middleware platform typically holds device data only for the specified interval; a dedicated storage service is then used to scale, compute and analyze the information.

The device meta-model mentioned earlier is one of the key aspects of an enterprise IoT application. A device meta-model can be visualized as a set of metadata about the device, the parameters (input and output) and the functions (send, receive) that a device may emit or consume. By designing the device meta-model as part of the IoT solution, you can create a generalized solution for each industry vertical that abstracts out the dependency between the data sent by devices and the data used by the IoT platform and the services that work on that data. You can even work with a virtual device based on the device model to build and test the entire application without physically connecting the device. We will discuss the device meta-model in detail in the solution section.
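
A device meta-model could be captured as plain data structures, as in the Python sketch below. The field names, the `ExampleCo` thermostat and the uniform random simulation are illustrative assumptions rather than a standard format; the point is that a `VirtualDevice` can emit realistic-looking messages from the meta-model alone, so the rest of the application can be built and tested before any hardware is connected.

```python
import random
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Parameter:
    name: str
    unit: str
    value_range: Tuple[float, float]  # expected (min, max)


@dataclass
class DeviceMetaModel:
    """Hypothetical meta-model: device metadata, parameters and supported functions."""
    device_type: str
    manufacturer: str
    outputs: List[Parameter]  # data the device emits
    inputs: List[Parameter] = field(default_factory=list)  # data the device consumes
    functions: List[str] = field(default_factory=lambda: ["send", "receive"])


class VirtualDevice:
    """Simulates a device from its meta-model, so no physical hardware is needed."""

    def __init__(self, device_id: str, model: DeviceMetaModel, seed: int = 0) -> None:
        self.device_id = device_id
        self.model = model
        self._rng = random.Random(seed)  # seeded for reproducible test data

    def send(self) -> Dict[str, object]:
        # One reading per output parameter, drawn uniformly from its declared range.
        readings = {
            p.name: round(self._rng.uniform(*p.value_range), 2) for p in self.model.outputs
        }
        return {"device_id": self.device_id, "type": self.model.device_type, "readings": readings}


thermostat = DeviceMetaModel(
    device_type="thermostat",
    manufacturer="ExampleCo",  # hypothetical vendor
    outputs=[Parameter("temperature", "celsius", (15.0, 30.0)),
             Parameter("humidity", "percent", (20.0, 80.0))],
    inputs=[Parameter("setpoint", "celsius", (16.0, 28.0))],
)
message = VirtualDevice("thermo-001", thermostat, seed=42).send()
```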

All the capabilities of the IoT stack, starting from the messaging platform right up to the cognitive platform are typically made available as services over the cloud platform, which can be readily consumed to build IoT applications.

We will revisit this topic in detail in Chapter 3, when we discuss the IoT capabilities offered by popular cloud vendors and by open source software.

Data Storage

The data storage component handles the storage of continuous streams of data from devices. As mentioned earlier, we need a highly scalable storage service that can store terabytes of data and enable fast retrieval. The data needs to be replicated across servers to ensure high availability and avoid any single point of failure.

Typically, a NoSQL database or high-performance optimized storage is used for storing data. The design of the data model and schema is key to enabling fast retrieval and computation, and it makes things easier for downstream processing systems that use the data for analysis. For instance, if you are storing data from a connected car every minute, your data can be broken down into ids and values: the id field does not change for a given connected car instance, but the values keep changing, for example speed = 60 km/h, speed = 65 km/h, and so on. In that case, instead of storing key=value for each reading, you can store key=value1, value2, ... along with key=time1, time2, ... The data represents a sequence of values for a specific attribute over a period of time. This concept is referred to as a time series, and a database that supports these requirements is called a time series database. You could use NoSQL databases like MongoDB or Cassandra and design your schema so that it is optimized for storing time series data and performing statistical computations. Not all use cases require a time series database, but it is an important concept to keep in mind while designing IoT applications.
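
The key=value1, value2 layout can be sketched as hourly buckets that hold parallel arrays of timestamps and values. The Python below keeps everything in memory; the one-hour bucket size and the class and method names are assumptions for illustration, and a MongoDB or Cassandra schema would apply the same bucketing idea at the storage level.

```python
from collections import defaultdict
from statistics import mean
from typing import DefaultDict, Dict, List, Tuple

BucketKey = Tuple[str, str, int]  # (device_id, attribute, bucket start in epoch seconds)


class TimeSeriesStore:
    """Stores readings as parallel time/value arrays per (device, attribute, hour)."""

    BUCKET_SECONDS = 3600  # assumed bucket size: one hour

    def __init__(self) -> None:
        self._buckets: DefaultDict[BucketKey, Dict[str, List[float]]] = defaultdict(
            lambda: {"times": [], "values": []}
        )

    def append(self, device_id: str, attribute: str, ts: int, value: float) -> None:
        bucket_start = ts - ts % self.BUCKET_SECONDS
        bucket = self._buckets[(device_id, attribute, bucket_start)]
        bucket["times"].append(ts)  # key=time1, time2, ...
        bucket["values"].append(value)  # key=value1, value2, ...

    def stats(self, device_id: str, attribute: str, bucket_start: int) -> Dict[str, float]:
        """Simple statistics over one bucket; empty dict if the bucket has no data."""
        bucket = self._buckets.get((device_id, attribute, bucket_start))
        if not bucket or not bucket["values"]:
            return {}
        values = bucket["values"]
        return {"count": len(values), "min": min(values), "max": max(values), "mean": mean(values)}


store = TimeSeriesStore()
for minute, speed in enumerate([60.0, 65.0, 70.0]):
    store.append("car-1", "speed", minute * 60, speed)
store.append("car-1", "speed", 3600, 80.0)  # falls into the next hourly bucket
summary = store.stats("car-1", "speed", 0)
```

Grouping readings per device, attribute and time bucket keeps related values together, which is what makes range scans and per-bucket statistics cheap.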

In the context of an enterprise IoT application, the data storage layer should also support storing and handling unstructured and semi-structured information. Data from these sources (structured, unstructured and semi-structured) can be correlated to derive insights. For instance, information from equipment manuals (unstructured text) can be fed into the system, and sensor data from the connected equipment can be correlated with the manuals to raise critical functional alerts and suggest corrective measures. The IoT messaging middleware service usually provides a set of configurations to automatically store incoming device data in the specified storage service.
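
As a rough illustration of correlating sensor data with unstructured text, the sketch below attaches the matching section of an equipment manual to an alert. Everything here is hypothetical: the manual excerpts, the thresholds and the naive keyword match, which a real system would replace with text analytics or search over the ingested documents.

```python
from typing import Dict, List, Optional

# Hypothetical excerpts from an equipment manual (unstructured text).
MANUAL_SECTIONS: Dict[str, str] = {
    "4.2 Overheating": "If bearing temperature exceeds 90 C, reduce load and check lubrication.",
    "5.1 Vibration": "Sustained vibration above 7 mm/s indicates misalignment; inspect couplings.",
}


def find_guidance(keyword: str) -> Optional[str]:
    """Naive correlation: return the first manual section whose title contains keyword."""
    for title, text in MANUAL_SECTIONS.items():
        if keyword.lower() in title.lower():
            return f"{title}: {text}"
    return None


def check_reading(reading: Dict[str, float]) -> List[str]:
    """Raise alerts for out-of-range sensor values, attaching manual guidance."""
    alerts = []
    if reading.get("temperature_c", 0.0) > 90.0:  # assumed threshold
        alerts.append(f"CRITICAL overheating. {find_guidance('overheating')}")
    if reading.get("vibration_mm_s", 0.0) > 7.0:  # assumed threshold
        alerts.append(f"CRITICAL vibration. {find_guidance('vibration')}")
    return alerts


alerts = check_reading({"temperature_c": 95.0, "vibration_mm_s": 3.0})
```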

In a future article, I will discuss the various storage options that cloud providers offer for storing massive amounts of data from connected devices.

Data Aggregation and Filter

The IoT core platform deals with raw data coming from multiple devices, and not all of that data needs to be consumed or treated equally by your application. We discussed the device gateway pattern earlier, which can filter data before sending it to the cloud platform. However, your device gateway may not have the storage or computing power to filter large volumes of data, or it may not be able to handle every filtering scenario. In some solutions, devices connect directly to the platform without a device gateway. As part of your IoT application, you need to carefully decide which data needs to be consumed and which data may not be relevant in that context. The data filter component can apply anything from simple rules to complex conditions, based on your data dependency graph, to filter the incoming data. A data mapper component is also used to convert raw data from the devices into an abstract data model that is used by the rest of the components.
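
A data filter and data mapper might look like the Python sketch below. The raw field names (`dev`, `t`, `ts`, `status`), the three filter rules and the abstract model's field names are assumptions made for illustration; a real filter would be driven by your own data dependency graph.

```python
from typing import Any, Callable, Dict, List

RawReading = Dict[str, Any]
Rule = Callable[[RawReading], bool]

# Hypothetical filter rules: a reading is kept only if every rule returns True.
FILTER_RULES: List[Rule] = [
    lambda r: r.get("status") == "ok",  # drop readings the device flags as faulty
    lambda r: isinstance(r.get("t"), (int, float)),  # drop malformed payloads
    lambda r: -40 <= r["t"] <= 125,  # drop physically implausible temperatures
]


def passes_filters(raw: RawReading, rules: List[Rule] = FILTER_RULES) -> bool:
    # all() short-circuits, so later rules can rely on earlier checks.
    return all(rule(raw) for rule in rules)


def map_to_model(raw: RawReading) -> Dict[str, Any]:
    """Data mapper: rename vendor-specific fields to the abstract model's names."""
    return {
        "device_id": raw["dev"],
        "temperature_celsius": float(raw["t"]),
        "timestamp": raw["ts"],
    }


def process(batch: List[RawReading]) -> List[Dict[str, Any]]:
    return [map_to_model(r) for r in batch if passes_filters(r)]


batch = [
    {"dev": "s1", "t": 21.5, "ts": 1000, "status": "ok"},
    {"dev": "s2", "t": 999, "ts": 1000, "status": "ok"},  # implausible, filtered out
    {"dev": "s3", "t": 22.0, "ts": 1000, "status": "fault"},  # faulty, filtered out
]
clean = process(batch)
```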

In some cases, you need to contextualize device data with more information, for example by combining the current data from devices with data from existing asset management software (to retrieve warranty information for physical devices) or from a weather station for further analysis. That’s where data aggregation components come in: they allow you to aggregate and enrich the incoming data. The aggregation component can also be part of the stream processing framework discussed in the next section, but instead of using complex flows just to aggregate information, this requirement can often be handled with simplified flows and custom code, without the overhead of a stream processing infrastructure.
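
Enrichment can often be a simple lookup and merge, as the text suggests. In this sketch the dictionaries `ASSET_REGISTRY` and `WEATHER_BY_SITE` are hypothetical stand-ins for calls to an asset management system and a weather service, and their sample values are made up.

```python
from typing import Any, Dict

# Hypothetical stand-ins for an asset management system and a weather service.
ASSET_REGISTRY: Dict[str, Dict[str, str]] = {
    "pump-7": {"site": "plant-a", "warranty_until": "2026-03-31"},
}
WEATHER_BY_SITE: Dict[str, Dict[str, float]] = {
    "plant-a": {"ambient_temp_c": 31.0, "humidity_pct": 70.0},
}


def enrich(reading: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of the reading merged with asset and weather context."""
    enriched = dict(reading)  # leave the original message untouched
    asset = ASSET_REGISTRY.get(reading["device_id"], {})
    enriched["asset"] = asset
    site = asset.get("site")
    enriched["weather"] = WEATHER_BY_SITE.get(site, {}) if site else {}
    return enriched


enriched = enrich({"device_id": "pump-7", "temperature_c": 71.5})
```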

Analytics Platform Layer

The Analytics Platform layer provides a set of key capabilities to analyze large volumes of information, derive insights and enable applications to take required action.

Stream Processing

Real-time stream processing is about processing streams of data from devices (or any source) in real time: analyzing the information, performing computations and triggering events for required actions. The stream processing infrastructure interacts directly with the IoT messaging middleware component by listening to specified topics. It acts as a subscriber that consumes messages (data from devices) arriving continuously at the IoT messaging middleware layer.

Typical requirements for stream processing software include high scalability, the ability to handle large volumes of continuous data, fault tolerance, and support for interactive queries in some form, such as SQL queries that act on the stream of data and trigger alerts when conditions are not met.
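
The kind of continuous query described above (for example, the average temperature over the last few readings, with an alert when it exceeds a limit) can be sketched with a sliding window. This plain Python generator is only an illustration: the window size, the threshold and the `(timestamp, value)` input format are assumptions, and an engine such as Spark Streaming would run equivalent logic in a distributed, fault-tolerant way over a message topic rather than a Python list.

```python
from collections import deque
from typing import Deque, Iterable, Iterator, Tuple


def window_alerts(
    stream: Iterable[Tuple[int, float]], window: int, threshold: float
) -> Iterator[Tuple[int, float]]:
    """Yield (timestamp, average) whenever the average of the last `window`
    readings exceeds `threshold` (a count-based sliding window)."""
    recent: Deque[float] = deque(maxlen=window)  # oldest value drops out automatically
    for ts, value in stream:
        recent.append(value)
        if len(recent) == window:
            average = sum(recent) / window
            if average > threshold:
                yield ts, average


# Usage: a list stands in for messages arriving on a middleware topic.
readings = [(0, 70.0), (1, 72.0), (2, 80.0), (3, 90.0), (4, 95.0)]
alerts = list(window_alerts(readings, window=3, threshold=80.0))
```

Because it is a generator, the function processes one message at a time and never needs the whole stream in memory.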

Most big data implementations started with Hadoop, which supported only batch processing. With the advent of various real-time streaming technologies and the growing need to handle massive amounts of data in real time, applications are now migrating to stream-based technologies that can process data in real time. A stream processing platform like Apache Spark Streaming enables you to write streaming jobs that process streaming data using the Spark API, and to combine streams with batch and interactive queries. Projects already using an existing Hadoop-based batch processing system can still benefit from real-time stream processing by combining their batch queries with the stream-based interactive queries offered by Spark Streaming.

The stream processing engine acts as a data processing backbone that can hand off streams of data to multiple other services simultaneously for parallel execution, or to your own custom application for processing. For instance, a stream processing instance can invoke a set of custom applications, one executing complex rules and another invoking a machine learning service.
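
The fan-out pattern can be sketched with Python's `concurrent.futures`. Both handlers are hypothetical placeholders: `rules_engine` applies a single hard-coded rule, and `ml_service` stands in for a call to a hosted model and returns a made-up risk score rather than a real prediction.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

Event = Dict[str, float]
Handler = Callable[[Event], str]


def rules_engine(event: Event) -> str:
    # Hypothetical rule: flag high engine temperature.
    return "rule:overheat" if event["engine_temp_c"] > 110 else "rule:ok"


def ml_service(event: Event) -> str:
    # Placeholder for a call to a hosted machine learning scoring endpoint.
    score = min(1.0, event["engine_temp_c"] / 150)
    return f"ml:failure_risk={score:.2f}"


def fan_out(event: Event, handlers: List[Handler]) -> List[str]:
    """Hand the same event to every handler in parallel; results keep handler order."""
    with ThreadPoolExecutor(max_workers=max(1, len(handlers))) as pool:
        return list(pool.map(lambda handler: handler(event), handlers))


results = fan_out({"engine_temp_c": 120.0}, [rules_engine, ml_service])
```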

Machine Learning

The following is Wikipedia’s definition of machine learning:

“Machine learning explores the study and construction of algorithms that can learn from and make predictions on data.”

In simple terms, machine learning is how we make computers learn from data using various algorithms, without explicitly programming them, so that they can provide the required outcome, such as classifying an email as spam or not spam, or predicting a real estate price based on historical values and other environmental factors.

Machine learning is typically classified into three broad categories:

- Supervised learning – In this methodology, we provide labeled data (input and desired output) and train the system to learn from it and predict outcomes. A classic example of supervised learning is Facebook automatically recognizing your friends in photos based on your earlier tags, or your email application recognizing spam automatically.

- Unsupervised learning – In this methodology, we don’t provide labeled data and leave it to algorithms to find hidden structure in unlabeled data. For instance, clustering similar news in one bucket or market segmentation of users are examples of unsupervised learning.

- Reinforcement learning – Reinforcement learning is about systems learning by interacting with the environment rather than being taught. For instance, a computer playing chess knows what it means to win or lose, but it learns over time, through interactions with the user, how to move forward in the game in order to win.
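
To make supervised learning concrete, the sketch below fits a one-feature linear regression with ordinary least squares on labeled examples (inputs paired with desired outputs) and then predicts an unseen input, in the spirit of the real estate example above. The sizes and prices are made-up numbers chosen to lie on a straight line; a real model would use many more features and a library such as scikit-learn.

```python
from typing import List, Tuple


def fit_linear(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Ordinary least squares for y = slope * x + intercept (one feature)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    return slope, mean_y - slope * mean_x


# Labeled training data: input is size in square meters, label is price (made-up units).
sizes = [50.0, 80.0, 100.0, 120.0]
prices = [150.0, 240.0, 300.0, 360.0]
slope, intercept = fit_linear(sizes, prices)
predicted = slope * 90.0 + intercept  # prediction for a size not in the training data
```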

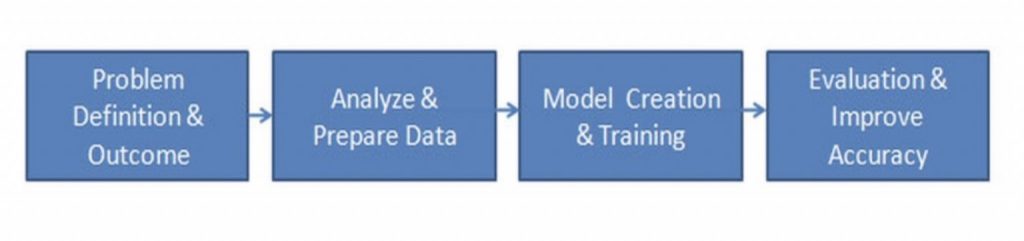

Machine learning process typically consists of 4 phases as shown in the figure below – understanding the problem definition and the expected business outcome, data cleansing, and analysis, model creation, training and evaluation. This is an iterative process where models are continuously refined to improve its accuracy.

From an IoT perspective, machine learning models are developed for use cases in different industry verticals. Some, like anomaly detection, can be common across the stack, while others are use case specific, like condition-based maintenance and predictive maintenance for manufacturing.
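
Anomaly detection can be illustrated with a simple statistical baseline: learn the mean and standard deviation of historical readings, then flag new readings that fall more than three standard deviations away. This z-score rule is a deliberately simple stand-in for the models a production system would use, and the readings are made up.

```python
from statistics import mean, stdev
from typing import List, Tuple


def fit_baseline(history: List[float]) -> Tuple[float, float]:
    """'Train' on historical readings assumed to be normal: learn mean and spread."""
    return mean(history), stdev(history)


def is_anomaly(value: float, baseline: Tuple[float, float], k: float = 3.0) -> bool:
    """Flag values more than k standard deviations away from the learned mean."""
    mu, sigma = baseline
    return abs(value - mu) > k * sigma


history = [70.1, 69.8, 70.4, 70.0, 69.9, 70.2, 70.3, 69.7]  # made-up normal readings
baseline = fit_baseline(history)
flagged = [v for v in [70.5, 75.0, 69.9] if is_anomaly(v, baseline)]
```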

Actionable Insights (Events & Reporting)

Actionable insights, as part of the analytics platform layer, are a set of services that make it easier to invoke the required action based on the analyzed data. The action can trigger events, call external services, or update reports and dashboards in real time. Examples include invoking a third-party service through an HTTP/REST connector to create a work order based on the outcome of the condition-based maintenance service, or invoking a single API to send push notifications about maintenance events across mobile devices. Actionable insights can also be configured from real-time dashboards that let you create the rules and actions to be triggered.
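
A rules-and-actions service of this kind can be sketched as a list of rules, each pairing a condition with one or more actions. The Python below is a hypothetical illustration: `create_work_order` and `push_notification` only return strings, where a real implementation would call a REST connector and a push notification service, and the 0.7 failure-probability threshold is an arbitrary example value.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Event = Dict[str, Any]


@dataclass
class Rule:
    name: str
    condition: Callable[[Event], bool]
    actions: List[Callable[[Event], str]]


def create_work_order(event: Event) -> str:
    # Hypothetical: a real connector would POST a payload to a third-party REST API.
    return f"work-order for {event['asset_id']}"


def push_notification(event: Event) -> str:
    # Hypothetical: a real implementation would call a push notification service.
    return f"push: maintenance due on {event['asset_id']}"


def evaluate(rules: List[Rule], event: Event) -> List[str]:
    """Run every action of every rule whose condition matches the event."""
    fired: List[str] = []
    for rule in rules:
        if rule.condition(event):
            fired.extend(action(event) for action in rule.actions)
    return fired


rules = [
    Rule(
        name="maintenance-needed",
        condition=lambda e: e.get("failure_probability", 0.0) > 0.7,
        actions=[create_work_order, push_notification],
    )
]
fired = evaluate(rules, {"asset_id": "press-3", "failure_probability": 0.82})
```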

The service should also allow your application’s custom code to be executed to carry out the desired functionality. Your custom code can be uploaded to the cloud or built using the provided runtimes, and integrated with the rest of the stack through the platform APIs.

Cognitive Platform Layer

Let’s first understand what cognitive computing is. Cognitive computing systems are designed to make computers think and learn like the human brain. Just as a human mind evolves from newborn to teenager to adult, learning new information and augmenting existing information, a cognitive system learns from the vast amount of information fed to it. Such a system is trained on a set of information or data so that it can understand the context and help in making informed decisions.

For example, in any learning methodology, a human mind learns and understands context. It can answer questions based on what it has learned and make informed judgments based on prior experience. Similarly, cognitive systems are modeled to learn from a past reference data set (or learnings) and enable users to make informed decisions. Cognitive systems can be thought of as systems that are not explicitly programmed but instead learn through information, training, interactions and a reference data set.

Cognitive systems will play a key role in IoT in the future. Imagine ten years down the line, when every system is connected to the internet, is probably an integral part of everyday life and shares information continuously. How would you like to interact with the smart devices that surround you? You would virtually talk to them, and they would respond based on your actions and behavior.

A good example is a connected car. As soon as you enter the car, it should recognize you automatically, adjust your seat, start the car and start reading your priority emails. This is not programmed but learned over time. Over time, the car should also recommend how to improve mileage based on your driving patterns. In the future, you should be able to speak to devices through tweets, spoken words and gestures, and devices would be able to understand the context and respond accordingly. For instance, a smart device in a connected home would react differently from devices in a connected car.

For a connected home, a cognitive IoT system can learn from you and set things up based on your patterns and movements, be it waking you up at the right time, starting your coffee machine, sending you a WhatsApp message to start the washing machine if you missed it in your routine, or managing the home lighting system based on your family’s preferences. Imagine placing a smart controller and a set of devices around your home that observe you over a period of time, start making intelligent decisions by Day 10, and continuously learn from you and your family’s interactions.

From an implementation perspective, at a very high level, building a cognitive platform requires a combination of technologies, such as machine learning, natural language processing, reinforcement learning and domain adaptation through various techniques and algorithms, in addition to the IoT stack capabilities discussed earlier. The cognitive platform layer should enable us to analyze structured, semi-structured and unstructured information, perform correlations and derive insights.

This is one of the areas where we will see a lot of innovation and investment in the future, and it will be a key differentiator for connected products and an extension of our digital lives.

Solutions Layer

This section will discuss industrial or consumer applications built on top of the IoT stack leveraging the various services (messaging, streaming, machine learning, etc.) offered by the IoT stack.

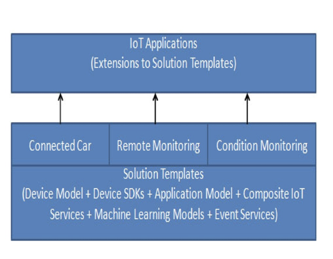

Solutions can be broken down into two parts – Solution Templates and Applications. The following diagram shows the concept of Solution Templates and Applications.

Solution templates are a common set of services, developed for a specific or generalized use case, that provide a head start for building IoT applications and can be extended to build custom IoT applications. Building an IoT application requires a common set of tasks and services, as well as configuration of components via dashboards or APIs, and the entire process can be simplified and abstracted through solution templates. The key component of a solution template is the abstract data model. The solution’s abstract data model combines the device meta-model discussed earlier in the IoT Messaging Middleware section with your application data model specific to that domain, industry or vertical use case. The services from the solution template use the abstract data model for communication instead of dealing directly with data from sensors. The abstract data model differs across industries: a connected car abstract data model would be different from a connected home one. But if the abstract data model is properly envisioned and designed, it can provide the much-needed abstraction between devices and platforms.

As an example, for a connected car solution, the abstract data model could be the vehicle’s runtime data plus GPS data plus asset data of the vehicle, which together constitute the device meta-model and the application data model. The abstract data model can then be used for various other connected car use cases. It is like creating a template that can be applied to different connected solutions within the same domain or industry. For example, a connected vehicle solution for a sedan may differ from one for an SUV, but a common abstract data model, to some extent, provides the basic connected car functionality applicable to all connected cars irrespective of model or make. Some examples of solution templates are remote monitoring, predictive maintenance and geospatial analysis.
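
The connected car abstract data model described above could be expressed as composed data classes, as in this Python sketch. The field names, the 15,000 km service interval and the sample vehicles are assumptions for illustration; the point is that `service_due` works for a sedan and an SUV alike because it depends only on the abstract model, not on any vendor’s sensor format.

```python
from dataclasses import dataclass


@dataclass
class RuntimeData:
    """Vehicle telemetry, taken from the device meta-model."""
    speed_kmh: float
    fuel_level_pct: float
    odometer_km: float


@dataclass
class GpsData:
    """Location data, taken from the device meta-model."""
    latitude: float
    longitude: float


@dataclass
class AssetData:
    """Business context from the application data model (e.g. asset management)."""
    vin: str
    make: str
    body_type: str  # e.g. "sedan" or "suv"
    last_service_km: float


@dataclass
class ConnectedCar:
    """Hypothetical abstract data model: runtime + GPS + asset data."""
    runtime: RuntimeData
    gps: GpsData
    asset: AssetData

    def service_due(self, interval_km: float = 15000.0) -> bool:
        # Depends only on the abstract model, so it applies to any make or body type.
        return self.runtime.odometer_km - self.asset.last_service_km >= interval_km


sedan = ConnectedCar(
    RuntimeData(speed_kmh=60.0, fuel_level_pct=55.0, odometer_km=46000.0),
    GpsData(latitude=12.97, longitude=77.59),
    AssetData(vin="VIN-SEDAN-1", make="ExampleMake", body_type="sedan", last_service_km=30000.0),
)
suv = ConnectedCar(
    RuntimeData(speed_kmh=0.0, fuel_level_pct=80.0, odometer_km=38000.0),
    GpsData(latitude=19.08, longitude=72.88),
    AssetData(vin="VIN-SUV-1", make="OtherMake", body_type="suv", last_service_km=30000.0),
)
```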

One can use solution templates to build an application based on customer requirements. Note that predictive maintenance requirements differ for each industry: the devices and equipment that need to be connected differ across manufacturing plants, the data from devices would be totally different, the historical data would be different, and a lot of effort would be required just to connect and integrate the manufacturing equipment with the platform. However, the steps required to build a predictive maintenance system should be pretty much the same. For instance, it would require working with existing asset management solutions to identify master data for equipment; connecting to multiple data sources (for instance, a historical database) to extract, cleanse, normalize and aggregate the data; converting it into a form that can be consumed by services (the abstract model); and developing machine learning models that use the abstract model to predict outcomes. All these common steps and services can be abstracted into a solution template, and application providers can use this template to provide implementations or integrations based on the requirements.

Let’s take another example. A manufacturing company already knows what parameters are required for servicing equipment, what information needs to be monitored, what the remaining lifecycle of the machinery is (based on when it was bought rather than on its actual condition) and what external factors (temperature, weather, etc.) can cause a machine to fail. This information can easily be turned into an abstract data model. Machine learning models that use this abstract data model can then be developed and executed to predict machinery failures. The only remaining task would be to map the data from the sensors to the abstract model. The abstract data model provides a generic abstraction of the data dependency between devices and the IoT platform.

However, existing platforms and solutions in the market are still far from building solution templates on top of an abstract data model, due to multiple factors: heterogeneity of devices, domain expertise and integration challenges. In the future, not just the platform but also the solution templates and applications on offer would be a key differentiator between IoT cloud offerings. IoT cloud providers would enter into partnerships with manufacturers and system integrators to build viable, generic and reusable solutions.

IoT Security and Management

Building and deploying an end-to-end enterprise IoT application is a complex process. Multiple players are involved (hardware providers, embedded device manufacturers, network providers, platform providers, solution providers, system integrators), which increases the complexity of integration, security and management. To address this complexity holistically, an enterprise IoT stack needs to provide a set of capabilities that ease the overall process and take care of end-to-end cross-cutting concerns like security and performance. In this section, we will discuss the key aspects that, at a minimum, should be addressed by an enterprise IoT stack.

Device Management

Device management includes aspects like device registration, secure device provisioning and access from device to cloud platform and cloud platform to device, monitoring and administration, troubleshooting and pushing firmware and software updates to devices including gateway devices. A device gateway might also include local data storage and data filter component as discussed earlier, which needs to be updated in case of new versions or support patches.

An enterprise IoT platform should provide an administration console and/or APIs that allow devices to register with the platform securely. The device registration capability should allow fine-grained access control and permissions over what operations a device can carry out in the context of that application. Some devices may require only one-way communication from cloud to device, for example to handle firmware updates, while others, such as gateways, need bidirectional communication. Some devices may only have read access, while others can post messages to the platform. Security should be enforced both through configuration and over the specified protocol. For instance, even if an intruder gains access to a device, the intruder should not be able to post messages to the platform. Device SDKs should provide secure libraries so that the communication process does not introduce vulnerabilities.
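
Fine-grained device permissions could be modeled as in the following Python sketch, where each registered device receives its own credential and a set of allowed operations. The `Permission` names and the `DeviceRegistry` API are hypothetical. Real platforms commonly rely on mechanisms such as X.509 device certificates and would not keep credentials in plain form; this sketch only illustrates the authorization check.

```python
import hmac
import secrets
from dataclasses import dataclass, field
from enum import Flag, auto
from typing import Dict


class Permission(Flag):
    READ = auto()  # may receive data or commands from the cloud
    PUBLISH = auto()  # may post messages to the platform
    FIRMWARE_UPDATE = auto()  # may receive cloud-to-device firmware updates


@dataclass
class DeviceRecord:
    device_id: str
    permissions: Permission
    token: str = field(repr=False)  # keep the credential out of logs/reprs


class DeviceRegistry:
    """Hypothetical registry issuing per-device credentials with scoped permissions."""

    def __init__(self) -> None:
        self._devices: Dict[str, DeviceRecord] = {}

    def register(self, device_id: str, permissions: Permission) -> str:
        token = secrets.token_urlsafe(32)
        self._devices[device_id] = DeviceRecord(device_id, permissions, token)
        return token  # delivered to the device once, during secure provisioning

    def authorize(self, device_id: str, token: str, needed: Permission) -> bool:
        record = self._devices.get(device_id)
        if record is None:
            return False
        # Constant-time comparison avoids leaking token contents via timing.
        if not hmac.compare_digest(record.token, token):
            return False
        return needed in record.permissions


registry = DeviceRegistry()
sensor_token = registry.register("sensor-1", Permission.PUBLISH)
gateway_token = registry.register(
    "gateway-1", Permission.READ | Permission.PUBLISH | Permission.FIRMWARE_UPDATE
)
```

With this setup, `sensor-1` can publish but not receive commands, and a guessed or stolen-but-wrong token is rejected even for a registered device.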

Monitoring and Administration

Monitoring and administration are about managing the lifecycle of the device. Lifecycle operations include register, start, pause and stop activities, and the ability to trigger events and commands to and from devices. A pause state could be a valid requirement for use cases like health tracking devices, as opposed to a connected car. The ability to add custom states based on requirements should be part of the monitoring and administration capabilities. Monitoring should also capture various parameters that help troubleshoot devices, such as device make, installed software and libraries, last connected date, last data sent, available storage and current status. For instance, a device gateway may stop functioning because it has run out of storage space, which could happen if the remote synchronization service was not running to transfer stored data off the device and free up space. Monitoring should also identify suspicious activity and provide a mechanism to address it.
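
Lifecycle management with custom states can be sketched as a small state machine. The default transitions below, and the `storage_full` custom state used in the example, are assumptions for illustration; each deployment would define its own states, for instance omitting `paused` for a connected car.

```python
from typing import Dict, Iterable, Optional, Set

# Hypothetical default transitions between lifecycle states.
DEFAULT_TRANSITIONS: Dict[str, Set[str]] = {
    "registered": {"started"},
    "started": {"paused", "stopped"},
    "paused": {"started", "stopped"},
    "stopped": {"started"},
}


class DeviceLifecycle:
    """Tracks a device's lifecycle state and rejects transitions that are not allowed."""

    def __init__(self, transitions: Optional[Dict[str, Set[str]]] = None) -> None:
        source = transitions if transitions is not None else DEFAULT_TRANSITIONS
        # Copy the sets so custom states never modify the shared defaults.
        self.transitions: Dict[str, Set[str]] = {k: set(v) for k, v in source.items()}
        self.state = "registered"

    def add_state(self, name: str, from_states: Iterable[str], to_states: Iterable[str]) -> None:
        """Add a custom state, reachable from `from_states` and able to move to `to_states`."""
        self.transitions.setdefault(name, set()).update(to_states)
        for src in from_states:
            self.transitions.setdefault(src, set()).add(name)

    def can_move_to(self, target: str) -> bool:
        return target in self.transitions.get(self.state, set())

    def move_to(self, target: str) -> None:
        if not self.can_move_to(target):
            raise ValueError(f"cannot move from {self.state!r} to {target!r}")
        self.state = target


# Usage: a gateway gets a custom state for when its local storage fills up.
gateway = DeviceLifecycle()
gateway.move_to("started")
gateway.add_state("storage_full", from_states={"started"}, to_states={"started", "stopped"})
gateway.move_to("storage_full")
```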

Lastly, device management should provide capabilities to update the firmware, software and dependent libraries on devices securely, through administrative commands or auto-update features. Deploying updates across millions of devices remains a problem to be solved at scale. In the next section, we will talk about various deployment options.
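
One building block of secure updates is verifying that a downloaded image matches the digest published for it before installing it. The Python sketch below shows only that check, using SHA-256 from the standard library; the manifest format is hypothetical, and in practice the manifest itself would also need to be signed so that an attacker cannot replace both the image and its digest.

```python
import hashlib
import hmac
from typing import Dict


def verify_firmware(image: bytes, manifest: Dict[str, str]) -> bool:
    """Check an update image against the SHA-256 digest published in its manifest."""
    digest = hashlib.sha256(image).hexdigest()
    return hmac.compare_digest(digest, manifest["sha256"])


def apply_update(device: Dict[str, str], image: bytes, manifest: Dict[str, str]) -> bool:
    """Install only verified images; otherwise keep the current version."""
    if not verify_firmware(image, manifest):
        return False
    device["firmware_version"] = manifest["version"]
    return True


image = b"example firmware image v2.1"  # placeholder bytes, not a real image
manifest = {"version": "2.1.0", "sha256": hashlib.sha256(image).hexdigest()}
device = {"id": "gw-1", "firmware_version": "2.0.0"}
ok = apply_update(device, image, manifest)
rejected = apply_update(device, image + b"tampered", manifest)
```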

Deployment

Deployment of IoT applications needs to be looked at holistically, covering IoT devices, networks and topology, cloud services and end solutions, while taking care of end-to-end security. We are already seeing many partnerships in this space, where device manufacturers partner with cloud providers so that devices can register with the cloud provider securely. The deployment and management of devices is an area that needs a lot of attention and innovation, and we feel the next investments will happen in this space. This would include providing an end-to-end set of tools and environments to design and simulate connected products, deploy and manage millions of devices, use Docker images for device updates, and test everything from network topologies to the services and solutions that make up the IoT application.

This completes our architecture series. In the next article, we will look at applications of IoT in various industries.